The Best MCP Servers in 2026

Most AI agents don’t fail because of prompts. They fail because of orchestration problems: schema drift, retry loops, and state divergence. This guide breaks down the MCP servers, transport layers, and coordination infrastructure required to move from demos to production systems.

The Best MCP Servers in 2026: Why Most AI Agents Fail at the Coordination Layer

| Server Type | Best Use Case | Maturity | Primary Transport |
| --- | --- | --- | --- |
| Smithery | Team Tool Management | High | SSE / Docker |
| n8n MCP | Human-in-the-Loop Ops | High | Webhook / SSE |
| Postgres MCP | Structured Data Queries | Medium | Stdio / SSE |
| Filesystem/SQLite | Local Coding Assistance | High | Stdio (Local) |
| Custom SSE Proxy | Private Auth & Security | Medium | SSE |

Most teams eventually discover that adding tools to an AI agent is the easy part. The harder problem, and the point where most production deployments fail, is keeping state synchronized across those tools under load. While the Model Context Protocol (MCP) helps standardize how agents talk to external data, it also introduces a new class of coordination and debugging problems.

This guide moves past the plugin hype to look at the actual infrastructure required to make AI agents reliable enough for production.

The Executive Reality Check

- The State Gap: Adding tools is easy; keeping tool output and model reasoning synchronized under load is the real bottleneck.
- The Reasoning Tax: Every tool definition consumes prompt space, which can degrade a model’s focus before it even starts processing user intent.
- Transport Limits: Stdio is for local prototypes. Any agent serving multiple users or running in the cloud requires an SSE (Server-Sent Events) or Gateway architecture.
- Schema Fragility: Minor version drift in an MCP server often results in silent, non-deterministic reasoning failures.
- Coordination over Prompts: In production, engineering effort shifts from writing prompts to managing retries, auth, and state tracing.

The Zero-Click Answer

In 2026, the best MCP setup for developers is the SQLite/Filesystem pair via uvx for local tasks. For enterprise scale, the standard is a managed MCP Gateway (like Smithery) or a self-hosted n8n instance. These provide the necessary human-in-the-loop triggers and VPC isolation required to move past simple chat demos into reliable production workflows.

A Production Story: The Retry Failure

In several prototypes and experiments we ran, we hit a failure loop that no amount of prompt engineering could fix. The orchestrator successfully replayed an MCP tool call after a network timeout, but the model had already generated a reasoning path based on the initial failed response.

Even though the second, successful payload finally arrived, the model didn’t update its conclusion. It anchored to its own explanation of the failure. This is the reality of state divergence: once the conversation history and the tool’s actual output drift apart, the agent is essentially roleplaying consistency rather than solving a problem. Some of these orchestration bugs were maddeningly inconsistent under load.
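One way to keep history and tool output from drifting apart is to supersede the failed attempt before recording the retry. The sketch below is a minimal illustration, not a description of any particular orchestrator: the `Conversation` class, the message shape, and the `apply_retry_result` helper are all hypothetical names introduced here.

```python
from dataclasses import dataclass, field


@dataclass
class Conversation:
    """Ordered message history that is sent to the model on every turn."""

    messages: list[dict] = field(default_factory=list)

    def record_tool_result(self, call_id: str, content: str, ok: bool) -> None:
        self.messages.append(
            {"role": "tool", "call_id": call_id, "content": content, "ok": ok}
        )

    def supersede_failed_attempt(self, call_id: str) -> int:
        """Drop the failed result for call_id and everything after it.

        Anything the model wrote after the failure was reasoning about a
        response that is no longer true, so it is removed as well.
        Returns the number of messages removed.
        """
        for index, message in enumerate(self.messages):
            if (
                message["role"] == "tool"
                and message.get("call_id") == call_id
                and not message["ok"]
            ):
                removed = len(self.messages) - index
                del self.messages[index:]
                return removed
        return 0


def apply_retry_result(conv: Conversation, call_id: str, content: str) -> None:
    """Record a successful retry so the model reads it without stale context."""
    conv.supersede_failed_attempt(call_id)
    conv.record_tool_result(call_id, content, ok=True)
```

The design choice here is to rewrite history rather than append to it: the model never sees its own explanation of the failure, so it has nothing to anchor to. Whether truncating is acceptable depends on how much other work happened between the failure and the retry.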

1. The Reasoning Tax: The Cost of Tool Overload

Tool definitions are not a free lunch. Every MCP server you connect adds a “reasoning tax” to your context. We’ve observed that as the available toolset grows, the model’s focus shifts from solving the query to managing the overhead of its own schemas. This is where context engineering becomes more important than simple prompting.

Where Things Start Breaking

In experiments we ran, routing quality degraded noticeably once the available toolset became large enough that schemas dominated the context window.

| Tools Loaded | Estimated Token Bloat | TTFT Impact | Routing Reliability |
| --- | --- | --- | --- |
| 1-5 | < 2k tokens | Negligible | High |
| 10-15 | 3k – 5k tokens | ~200ms increase | Consistent |
| 20+ | > 8k tokens | > 500ms increase | Noticeably Inconsistent |

The Simplification Bias:

Models also tended to prefer simpler tools, even when those tools were obviously the wrong fit. For example, our agents would repeatedly favor a basic SearchFiles tool over a more complex Enterprise_CRM_Query just because its schema was shorter and easier for the model to parse. Ironically, richer tool ecosystems often reduce overall capability because the model spends its token budget navigating the toolset rather than reasoning. Over time, that cognitive overhead turns into a new kind of technical debt.
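To make the reasoning tax visible, it helps to measure how much context the tool schemas consume before any user input arrives. The following is a rough sketch only: it assumes about four characters per token, which is a common rule of thumb rather than a real tokenizer, and the function names are hypothetical.

```python
import json

# Assumption: roughly 4 characters per token. Swap in a real tokenizer
# for your model if you need accurate numbers.
CHARS_PER_TOKEN = 4


def estimate_schema_tokens(tool: dict) -> int:
    """Approximate the prompt tokens consumed by one tool definition."""
    serialized = json.dumps(tool, separators=(",", ":"))
    return -(-len(serialized) // CHARS_PER_TOKEN)  # ceiling division


def schema_budget_report(tools: list[dict], budget_tokens: int) -> dict:
    """Summarize per-tool cost and flag when the total exceeds the budget."""
    per_tool = {tool["name"]: estimate_schema_tokens(tool) for tool in tools}
    total = sum(per_tool.values())
    return {
        "per_tool": per_tool,
        "total": total,
        "over_budget": total > budget_tokens,
    }
```

Running a report like this whenever a server is added makes schema growth a reviewable number instead of something discovered through degraded routing.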

2. Moving from Stdio to SSE

The choice of transport determines how quickly you hit an operational ceiling. Stdio (Standard Input/Output) is the default for AI coding assistants like Cursor and Windsurf, but it’s fundamentally a single-user bridge. It breaks down quickly once agents leave the developer workstation.

We eventually had to add per-tool timeout budgets because some slower MCP servers were causing cascading queue buildup during multi-step workflows. The fix wasn’t sophisticated: we just stopped allowing long-running tools to block the orchestration loop.
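A per-tool timeout budget can be sketched with `asyncio.wait_for`. The tool names and budget values below are hypothetical, and the returned dictionary is just one possible way to hand a structured failure back to the orchestration loop.

```python
import asyncio

# Hypothetical budgets in seconds; tune per tool from observed latency.
TIMEOUT_BUDGETS_S = {"search_files": 2.0, "crm_query": 10.0}
DEFAULT_TIMEOUT_S = 5.0


async def call_with_budget(tool_name, call, *args):
    """Await a tool coroutine, but never longer than its budget.

    `call` is any coroutine function that performs the tool request.
    A timeout becomes a structured result instead of a stalled loop.
    """
    budget = TIMEOUT_BUDGETS_S.get(tool_name, DEFAULT_TIMEOUT_S)
    try:
        result = await asyncio.wait_for(call(*args), timeout=budget)
        return {"tool": tool_name, "ok": True, "result": result}
    except asyncio.TimeoutError:
        return {
            "tool": tool_name,
            "ok": False,
            "error": f"timed out after {budget}s",
        }
```

Returning a failure object rather than raising keeps the decision about retrying in the orchestrator, where it can be combined with the rest of the run's state.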

SSE (Server-Sent Events) worked well for us until auth delegation entered the picture. Then things got messy. We also hit a recurring issue with schema changes between MCP server versions. A tool that returned a nullable field in v0.3 would silently omit it in v0.4. This caused downstream failures as the model tried to “guess” data that it had previously relied on, leading to AI hallucinations that were hard to trace in complex RAG systems.
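A small contract check between the MCP server and the model can catch this kind of drift before the model sees the payload. This is a sketch under assumptions: the `get_customer` tool and its field names are invented for illustration, and the contract is a plain set of expected keys rather than a full JSON Schema.

```python
# Hypothetical contract: the fields each tool is expected to return.
EXPECTED_FIELDS = {"get_customer": {"id", "email", "phone"}}


def check_tool_payload(tool_name: str, payload: dict) -> dict:
    """Report fields that disappeared or appeared relative to the contract."""
    expected = EXPECTED_FIELDS.get(tool_name, set())
    present = set(payload)
    return {
        "missing": sorted(expected - present),
        "unexpected": sorted(present - expected) if expected else [],
    }


def normalize_payload(tool_name: str, payload: dict) -> dict:
    """Restore omitted nullable fields as explicit None values.

    An explicit null tells the model the value is unknown; a missing key
    invites it to guess.
    """
    normalized = dict(payload)
    for name in EXPECTED_FIELDS.get(tool_name, set()):
        normalized.setdefault(name, None)
    return normalized
```

Logging the output of `check_tool_payload` on every call gives you a trace of when a server upgrade changed the shape of its responses.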

3. Recommended MCP Servers for 2026

Smithery: Team-Wide Management

Smithery has become the go-to for teams needing a managed tool registry and containerized infrastructure.

- The Reality: It solves dependency hell by wrapping tools in Docker containers.
- The Catch: If your agent isn’t hitting the tool frequently, container cold-starts can spike latency enough to trigger model timeouts. Even so, a managed registry is a critical component in any AI agent builder software.

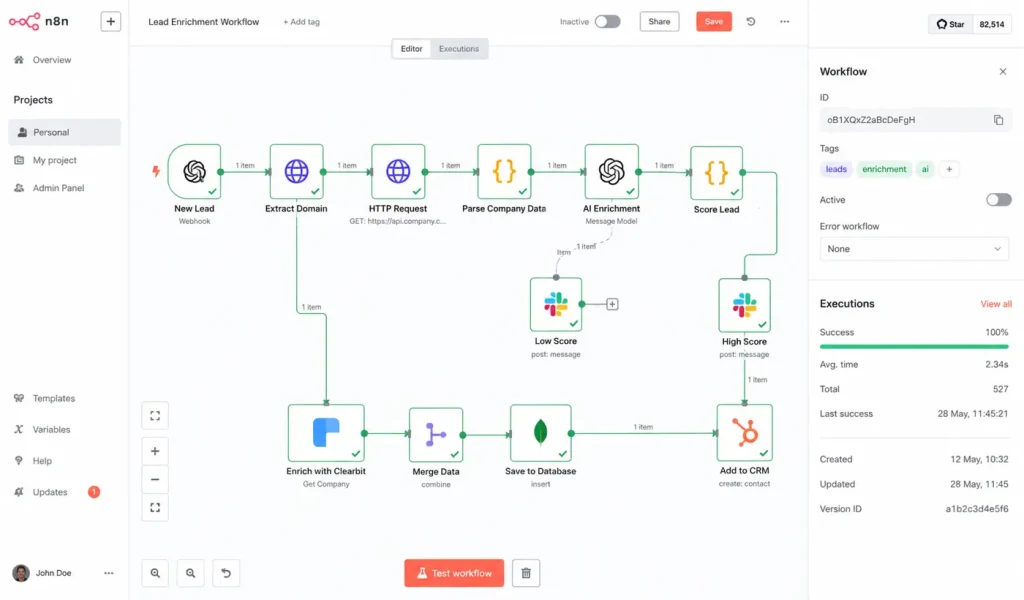

n8n MCP: Handling High-Stakes Actions

Many teams are replacing Zapier with n8n because it allows for native “Human-in-the-loop” triggers via MCP.

- The Deployment Lesson: This is the most reliable way to handle actions like moving money or updating production configs. The agent triggers the tool, but the tool “hangs” until a human approves the workflow in the n8n UI. It acts as a safety valve for AI-driven automation.
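The "hang until approved" pattern can be illustrated without any n8n-specific code. The sketch below is a generic asyncio approval gate, not n8n's API: `ApprovalGate`, `guarded_transfer`, and the action IDs are hypothetical, and in a real deployment the `decide` call would be driven by whatever UI or webhook the human uses.

```python
import asyncio


class ApprovalGate:
    """Holds high-stakes actions until a human decision arrives."""

    def __init__(self) -> None:
        self._pending: dict[str, asyncio.Future] = {}

    async def request(self, action_id: str, timeout_s: float) -> bool:
        """Block the tool call until decide() is called or time runs out."""
        future = asyncio.get_running_loop().create_future()
        self._pending[action_id] = future
        try:
            return await asyncio.wait_for(future, timeout=timeout_s)
        except asyncio.TimeoutError:
            return False  # no decision is treated as a denial
        finally:
            self._pending.pop(action_id, None)

    def decide(self, action_id: str, approved: bool) -> None:
        """Called from the human-facing side to release a pending action."""
        future = self._pending.get(action_id)
        if future is not None and not future.done():
            future.set_result(approved)


async def guarded_transfer(gate, action_id, amount, timeout_s=300.0):
    """Example tool body: nothing executes unless the gate approves."""
    if not await gate.request(action_id, timeout_s):
        return f"transfer of {amount} rejected or timed out"
    return f"transfer of {amount} executed"
```

Treating a timeout as a denial is the conservative default for actions like moving money; the agent receives an explicit rejection it can report rather than an open-ended wait.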

Postgres & SQLite MCP: Beyond Semantic Search

If your agent needs to know “how many units are in stock,” don’t use traditional retrieval. Use a SQL MCP server.

- The Technical Pain: We saw models repeatedly misuse foreign keys in larger schemas, especially when table names were semantically similar. Flattened reporting views performed much better than exposing normalized production schemas directly. This is a primary use case for vector databases and structured SQL bridges.
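A flattened reporting view can be sketched with Python's built-in `sqlite3` module. The tables, column names, and sample rows below are invented for illustration; the point is that the model only needs to query `stock_report` and never has to reason about the foreign keys behind it.

```python
import sqlite3


def build_demo_db() -> sqlite3.Connection:
    """Create a small normalized schema plus one flattened view over it."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE products (id INTEGER PRIMARY KEY, name TEXT NOT NULL);
        CREATE TABLE warehouses (id INTEGER PRIMARY KEY, city TEXT NOT NULL);
        CREATE TABLE stock_levels (
            product_id INTEGER REFERENCES products(id),
            warehouse_id INTEGER REFERENCES warehouses(id),
            units INTEGER NOT NULL
        );
        INSERT INTO products VALUES (1, 'widget'), (2, 'gadget');
        INSERT INTO warehouses VALUES (1, 'Austin'), (2, 'Denver');
        INSERT INTO stock_levels VALUES (1, 1, 40), (1, 2, 15), (2, 1, 7);

        -- The view the agent is allowed to see: no joins, no key columns.
        CREATE VIEW stock_report AS
        SELECT p.name AS product, w.city AS warehouse, s.units AS units
        FROM stock_levels s
        JOIN products p ON p.id = s.product_id
        JOIN warehouses w ON w.id = s.warehouse_id;
        """
    )
    return conn


def units_in_stock(conn: sqlite3.Connection, product: str) -> int:
    """Answer 'how many units are in stock' against the flat view."""
    row = conn.execute(
        "SELECT COALESCE(SUM(units), 0) FROM stock_report WHERE product = ?",
        (product,),
    ).fetchone()
    return row[0]
```

With the sample data, `units_in_stock(build_demo_db(), "widget")` returns 55, the sum of the two warehouse rows.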

4. Deployment Sequence

- Inventory (Week 1): Audit your agents and identify the 3 tools they use most. Prune the rest to lower the reasoning tax.
- Transport Migration (Week 2): If your agent is running in a shared cloud environment, migrate from Stdio to an SSE bridge.
- Governance (Week 3): Implement per-tool timeouts and a retry policy that forces the model to re-evaluate history. Check this against an AI reliability engineering framework.
- Observability (Week 4): Set up a dashboard specifically to monitor tool routing failures and schema mismatches.
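The observability step needs a data source before it needs a dashboard. As a minimal sketch, assuming outcome labels such as `"ok"`, `"routing_failure"`, and `"schema_mismatch"` (names chosen here for illustration), a counter per tool and outcome is enough to start:

```python
from collections import Counter


class ToolMetrics:
    """In-memory counts of tool outcomes, keyed by (tool, outcome)."""

    def __init__(self) -> None:
        self.events: Counter = Counter()

    def record(self, tool: str, outcome: str) -> None:
        self.events[(tool, outcome)] += 1

    def failure_rate(self, tool: str) -> float:
        """Share of calls for `tool` whose outcome was anything but 'ok'."""
        total = sum(
            count for (name, _), count in self.events.items() if name == tool
        )
        if total == 0:
            return 0.0
        return (total - self.events[(tool, "ok")]) / total
```

In production these counts would be exported to whatever metrics system you already run; the in-memory version is only meant to show which dimensions are worth tracking.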

FAQ: Implementation Realities

Should I use Stdio or SSE?

If you’re building a tool for yourself in Cursor, Stdio is fine. For anything that needs to serve multiple users or run in a cloud environment, you need SSE or a gateway.

How many tools is “too many”?

Reliability usually starts to dip after 10 tools. By the time you hit 25, tool selection becomes noticeably inconsistent unless you aggressively prune your schemas. If you need a huge library, use context engineering to only show the model the tools it needs for the current step.
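One simple form of this is a per-step allow-list, so the model only ever sees the schemas relevant to the current stage of the workflow. The step names and tool names below are hypothetical, and a real system might select tools by relevance scoring instead of a static mapping.

```python
# Hypothetical mapping from workflow step to the tools that step may use.
STEP_TOOLS = {
    "triage": ["search_files"],
    "billing": ["crm_query", "create_invoice"],
}


def tools_for_step(all_tools: list[dict], step: str, max_tools: int = 5) -> list[dict]:
    """Return only the tool definitions allowed for this step.

    Unknown steps get no tools, which is safer than exposing everything.
    """
    by_name = {tool["name"]: tool for tool in all_tools}
    selected = [by_name[name] for name in STEP_TOOLS.get(step, []) if name in by_name]
    return selected[:max_tools]
```

The full library can stay as large as you like; what matters for routing reliability is the subset that lands in the prompt for a given call.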

Why does my agent keep ignoring its tools?

It’s usually a schema or description problem. If a tool’s description is vague, the model will default to its internal knowledge. Be hyper-explicit about exactly when the tool must be used to avoid agent memory failures.

How do you handle authentication for remote servers?

Don’t pass raw API keys to the model. Use an MCP gateway that handles credential masking and session-based auth delegation.
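The core idea behind credential masking is that the model only ever handles an opaque session handle, while the gateway attaches the real secret on the outbound request. The class below is a toy illustration with hypothetical names, not the interface of any specific MCP gateway product.

```python
import secrets


class CredentialGateway:
    """Keeps API keys server-side and exposes only opaque session IDs."""

    def __init__(self) -> None:
        self._sessions: dict[str, str] = {}

    def open_session(self, api_key: str) -> str:
        """Store the key and return a handle that is safe to show the model."""
        session_id = secrets.token_urlsafe(16)
        self._sessions[session_id] = api_key
        return session_id

    def build_headers(self, session_id: str) -> dict[str, str]:
        """Attach the real credential at the last moment, outside the prompt."""
        return {"Authorization": f"Bearer {self._sessions[session_id]}"}

    def mask(self, session_id: str, text: str) -> str:
        """Scrub the key from tool output before it reaches the model."""
        return text.replace(self._sessions[session_id], "[REDACTED]")
```

Masking tool output matters as much as masking input: upstream error messages sometimes echo the credential they rejected.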

The Verdict: Most of the engineering work ended up going into orchestration, retries, auth propagation, and tracing, not the prompts themselves. If you aren’t architecting for state divergence and context overhead, you’re building a prototype, not a production system.

How are you planning to handle authentication for your remote MCP servers?